Month: March 2018

All about Spark DataSet API

Dataset API – The Dataset API, released as an API preview in Spark 1.6, aims to provide the best of both worlds: the familiar object-oriented programming style and compile-time type safety of the RDD API, combined with the performance benefits of the Catalyst query optimizer. Datasets also use the same efficient off-heap storage mechanism as the DataFrame API. When it comes to serializing data, the Dataset API has the concept of encoders, which translate between JVM representations (objects) and Spark's internal binary format. Spark's built-in encoders are very advanced in that they generate bytecode to interact with off-heap data and provide on-demand access to individual attributes without having to deserialize an entire object. Spark does not yet provide an API for implementing custom ...
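To make the typed style concrete, here is a minimal sketch rather than a definitive implementation. It assumes Spark 2.x or later (for `SparkSession`) running in local mode; the `Product` case class and its sample values are hypothetical and used only for illustration. The built-in encoders come into scope through `spark.implicits._`.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}

// Hypothetical record type, used only for illustration.
case class Product(pid: Int, pname: String, price: Int)

// Assumption: a local session; in spark-shell, getOrCreate() reuses the existing one.
val spark = SparkSession.builder().master("local[*]").appName("dataset-sketch").getOrCreate()
import spark.implicits._ // brings the built-in encoders for case classes and primitives into scope

// The encoder for Product translates between JVM objects and Spark's binary format.
val products: Dataset[Product] = Seq(Product(1, "oclay", 400), Product(2, "soap", 120)).toDS()

// Typed, object-style transformation: `p.price` is checked by the compiler.
val expensive: Dataset[Product] = products.filter(p => p.price > 200)

// map needs an Encoder[String], which is also provided by spark.implicits._
val names: Array[String] = expensive.map(_.pname).collect()
```

A misspelled field such as `p.prise` would be rejected at compile time here, which is the type-safety benefit the excerpt describes.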

Advantages and Downsides of Spark DataFrame API

DataFrame API – Spark 1.3 introduced a new DataFrame API as part of the Project Tungsten initiative, which seeks to improve the performance and scalability of Spark. The DataFrame API introduces the concept of a schema to describe the data, allowing Spark to manage the schema and pass only the data between nodes, which is much more efficient than using Java serialization. There are also advantages when performing computations in a single process, as Spark can serialize the data into off-heap storage in a binary format and then perform many transformations directly on this off-heap memory, avoiding the garbage-collection costs associated with constructing individual objects for each row in the data set. Because Spark understands the schema, there is no need to use Java serialization to encode the d...
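As an illustration of what "a schema to describe the data" looks like in practice, the following sketch builds a DataFrame from rows plus an explicit schema. It assumes Spark 2.x or later in local mode; the column names and sample rows are made up for the example.

```scala
import org.apache.spark.sql.{Row, SparkSession}
import org.apache.spark.sql.types.{IntegerType, StringType, StructField, StructType}

// Assumption: a local session; in spark-shell, getOrCreate() reuses the existing one.
val spark = SparkSession.builder().master("local[*]").appName("dataframe-sketch").getOrCreate()

// The schema names and types every column, so Spark does not need to inspect JVM objects.
val schema = StructType(Seq(
  StructField("pid", IntegerType, nullable = false),
  StructField("pname", StringType, nullable = true),
  StructField("price", IntegerType, nullable = true)
))

// Hypothetical sample rows matching the schema above.
val rows = Seq(Row(1, "oclay", 400), Row(2, "soap", 120))
val df = spark.createDataFrame(spark.sparkContext.parallelize(rows), schema)

df.printSchema()

// Column expressions are resolved against the schema at analysis time.
val cheapNames: Array[String] =
  df.filter(df("price") < 200).select("pname").collect().map(_.getString(0))
```

Unlike the typed Dataset style, a misspelled column name here is only detected at runtime, when Spark analyzes the query.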

Difference between DataFrame and Dataset in Apache Spark

| | DataFrame | Dataset |
|---|---|---|
| Spark Release | Spark 1.3 | Spark 1.6 |
| Data Representation | A DataFrame is a distributed collection of data organized into named columns. It is conceptually equivalent to a table in a relational database. | It is an extension of the DataFrame API that provides the type-safe, object-oriented programming interface of the RDD API together with the performance benefits of the Catalyst query optimizer and the off-heap storage mechanism of the DataFrame API. |
| Data Formats | It can process structured and unstructured data efficiently. It organizes the data into named columns. DataFrames allow Spark to manage the schema. | It also efficiently processes structured and unstructured data. It represents data in the form of JVM objects of a row or a collection of row objects, which is represented in tabular for... |

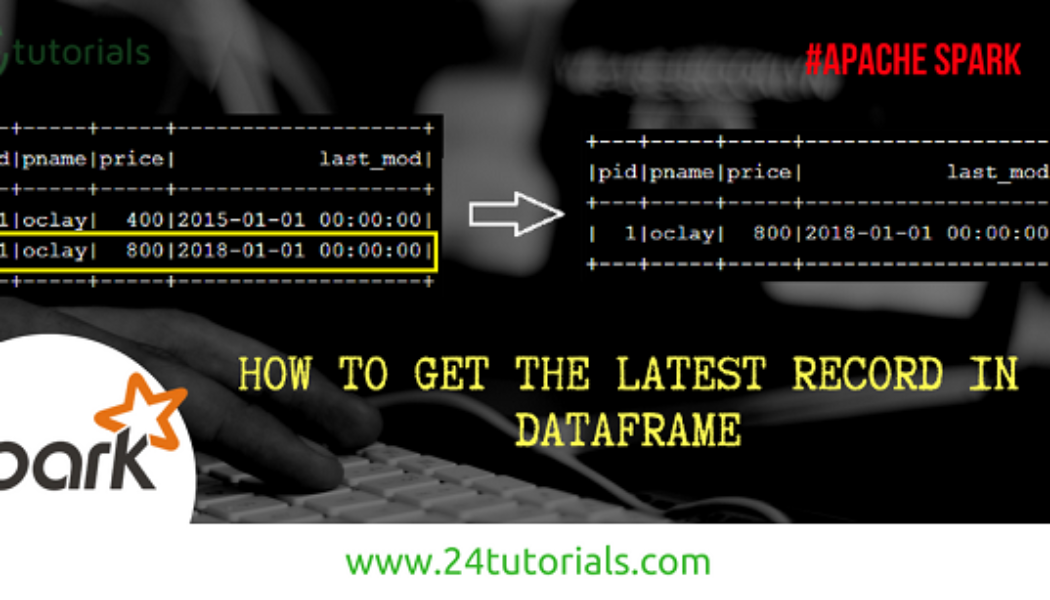

How to get latest record in Spark Dataframe

scala> val inputDF = sc.parallelize(Seq((1,"oclay",400,"2015-01-01 00:00:00"),(1,"oclay",800,"2018-01-01 00:00:00"))).toDF("pid","pname","price","last_mod")
scala> inputDF.show
+---+-----+-----+-------------------+
|pid|pname|price|           last_mod|
+---+-----+-----+-------------------+
|  1|oclay|  400|2015-01-01 00:00:00|
|  1|oclay|  800|2018-01-01 00:00:00|
+---+-----+-----+-------------------+
scala> import org.apache.spark.sql.DataFrame
import org.apache.spark.sql.expressions._
import org.apache.spark.sql.functions._
def getLatestRecs(df: DataFrame, partition_col: List[String], sortCols: List[String]): DataFrame = {
  // Pass head and tail separately so the first column is not listed twice
  val part = Window.partitionBy(partition_col.head, partition_col.tail: _*).orderBy(array(sortCols.head, sortCols.tail: _*).desc)
  val rowDF = df.withColumn("rn", row_number().over(part))
  v...
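Since the excerpt above is cut off, here is a self-contained sketch of the same window-function idea. It is not the author's remaining code: the helper name `latestPerKey` and its parameters are hypothetical, and the sketch assumes Spark 2.x or later in local mode. Each key's rows are ranked by the sort columns in descending order and only rank 1 is kept.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.functions.{col, row_number}

// Assumption: a local session; in spark-shell, getOrCreate() reuses the existing one.
val spark = SparkSession.builder().master("local[*]").appName("latest-record-sketch").getOrCreate()
import spark.implicits._

// Same sample data as in the excerpt.
val inputDF = Seq(
  (1, "oclay", 400, "2015-01-01 00:00:00"),
  (1, "oclay", 800, "2018-01-01 00:00:00")
).toDF("pid", "pname", "price", "last_mod")

// Hypothetical helper: keep the newest row per key.
def latestPerKey(df: DataFrame, keyCols: Seq[String], orderCols: Seq[String]): DataFrame = {
  // Rank rows within each key, newest first.
  val w = Window
    .partitionBy(keyCols.map(col): _*)
    .orderBy(orderCols.map(c => col(c).desc): _*)
  df.withColumn("rn", row_number().over(w))
    .filter(col("rn") === 1) // rank 1 is the latest row for its key
    .drop("rn")
}

val latest = latestPerKey(inputDF, Seq("pid"), Seq("last_mod"))
val latestPrices: Array[Int] = latest.select("price").collect().map(_.getInt(0))
```

The timestamps are plain strings here; they sort correctly only because the `yyyy-MM-dd HH:mm:ss` layout orders lexicographically. With other formats, casting the column to a timestamp first would be the safer choice.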

Caching and Persistence – Apache Spark

Caching and Persistence – By default, RDDs are recomputed each time you run an action on them. This can be expensive (in time) if you need to use a dataset more than once. Spark allows you to control what is cached in memory.

[code lang="scala"]
import org.apache.spark.rdd.RDD

val logs: RDD[String] = sc.textFile("/log.txt")
val logsWithErrors = logs.filter(_.contains("ERROR")).persist()
val firstnrecords = logsWithErrors.take(10)
[/code]

Here, we cache logsWithErrors in memory. After firstnrecords is computed, Spark keeps the evaluated partitions of logsWithErrors in memory, so later operations that reuse it do not have to recompute them.

[code lang="scala"]
val numErrors = logsWithErrors.count() // faster result
[/code]

Now, computing the count on logsWithErrors is much faster. There are many ways to c...
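As a small runnable variation on the snippet above, the following sketch replaces the log file with an in-memory sample (an assumption made so it runs without `/log.txt`) and names the storage level explicitly. `StorageLevel.MEMORY_ONLY` is the level that a bare `persist()` on an RDD uses; the sketch assumes Spark 2.x or later in local mode.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.storage.StorageLevel

// Assumption: a local session; in spark-shell, getOrCreate() reuses the existing one.
val spark = SparkSession.builder().master("local[*]").appName("persist-sketch").getOrCreate()
val sc = spark.sparkContext

// Hypothetical log lines standing in for the contents of a log file.
val logs = sc.parallelize(Seq("INFO start", "ERROR disk full", "ERROR timeout", "INFO done"))

// Mark the filtered RDD for caching; nothing is computed yet.
val logsWithErrors = logs.filter(_.contains("ERROR")).persist(StorageLevel.MEMORY_ONLY)

// First action: evaluates the partitions it needs and caches them.
val firstError = logsWithErrors.take(1)

// Second action: reuses cached partitions instead of re-running the filter on them.
val numErrors = logsWithErrors.count()

val level = logsWithErrors.getStorageLevel

// Release the cached blocks once the RDD is no longer needed.
logsWithErrors.unpersist()
```

Other levels, such as `StorageLevel.MEMORY_AND_DISK`, trade memory for recomputation cost in different ways; which one fits depends on the workload.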

Transformation and Actions in Spark

Transformations and Actions – Spark defines transformations and actions on RDDs.

Transformations – Return new RDDs as results. They are lazy: their result RDD is not immediately computed.

Actions – Compute a result based on an RDD, which is either returned or saved to an external storage system (e.g., HDFS). They are eager: their result is immediately computed.

Laziness/eagerness is how we can limit network communication using the programming model.

Example – Consider the following simple example:

val input: List[String] = List("hi", "this", "is", "example")
val words = sc.parallelize(input)
val lengths = words.map(_.length)

Nothing has happened on the cluster at this point; execution of map (a transformation) is deferred. We need t...
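The deferred execution described above can be observed directly. The sketch below is an illustration under stated assumptions: Spark 2.x or later in local mode, with a hypothetical accumulator named `mapCalls` that counts how often the function passed to `map` actually runs. Accumulator updates made inside transformations can be applied more than once if tasks are retried, so the counts hold for a clean local run only.

```scala
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell, getOrCreate() reuses the existing one.
val spark = SparkSession.builder().master("local[*]").appName("lazy-sketch").getOrCreate()
val sc = spark.sparkContext

val input: List[String] = List("hi", "this", "is", "example")
val words = sc.parallelize(input)

// Hypothetical counter that records each invocation of the map function.
val mapCalls = sc.longAccumulator("mapCalls")

// Transformation: only recorded in the lineage, not executed yet.
val lengths = words.map { w => mapCalls.add(1); w.length }
val callsBeforeAction: Long = mapCalls.value

// Action: triggers the job, runs the map function, and returns a result to the driver.
val totalChars = lengths.reduce(_ + _)
val callsAfterAction: Long = mapCalls.value
```

Before `reduce` is called, the counter is still zero; once the action runs, the map function has been applied to each of the four words.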
